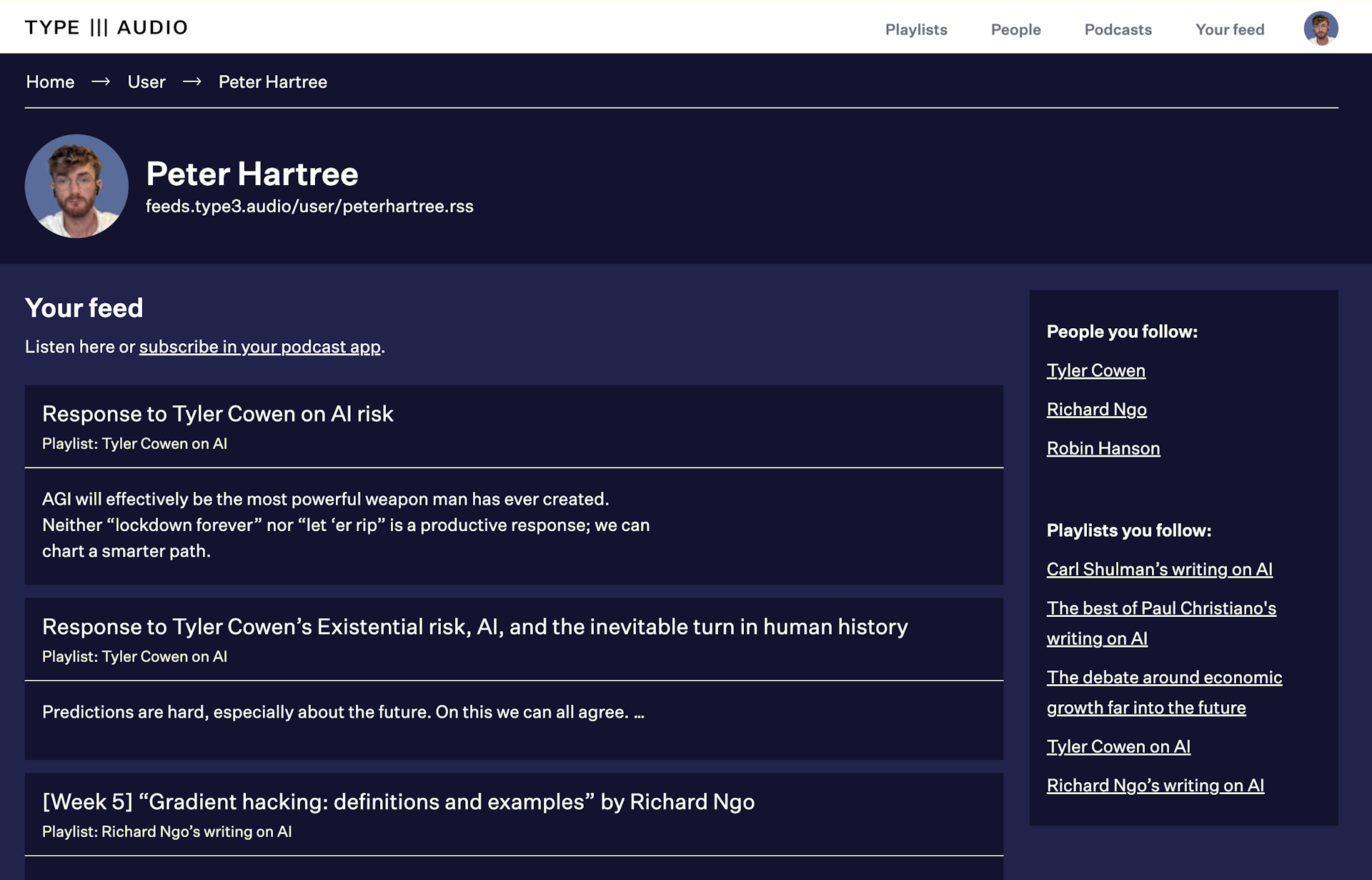

[Week 4] "Supervising strong learners by amplifying weak experts" by Paul Christiano, Buck Shlegeris & Dario Amodei

AI Safety Fundamentals: Alignment

Readings from the AI Safety Fundamentals: Alignment course.

Abstract:

Many real world learning tasks involve complex or hard-to-specify objectives, and using an easier-to-specify proxy can lead to poor performance or misaligned behavior. One solution is to have humans provide a training signal by demonstrating or judging performance, but this approach fails if the task is too complicated for a human to directly evaluate. We propose Iterated Amplification, an alternative training strategy which progressively builds up a training signal for difficult problems by combining solutions to easier subproblems. Iterated Amplification is closely related to Expert Iteration (Anthony et al., 2017; Silver et al., 2017), except that it uses no external reward function. We present results in algorithmic environments, showing that Iterated Amplification can efficiently learn complex behaviors.

Original text:

https://arxiv.org/abs/1810.08575

Narrated for AI Safety Fundamentals by Perrin Walker of TYPE III AUDIO.